The AI Never Told Me: What the FDA's Warning Letter on AI Actually Means

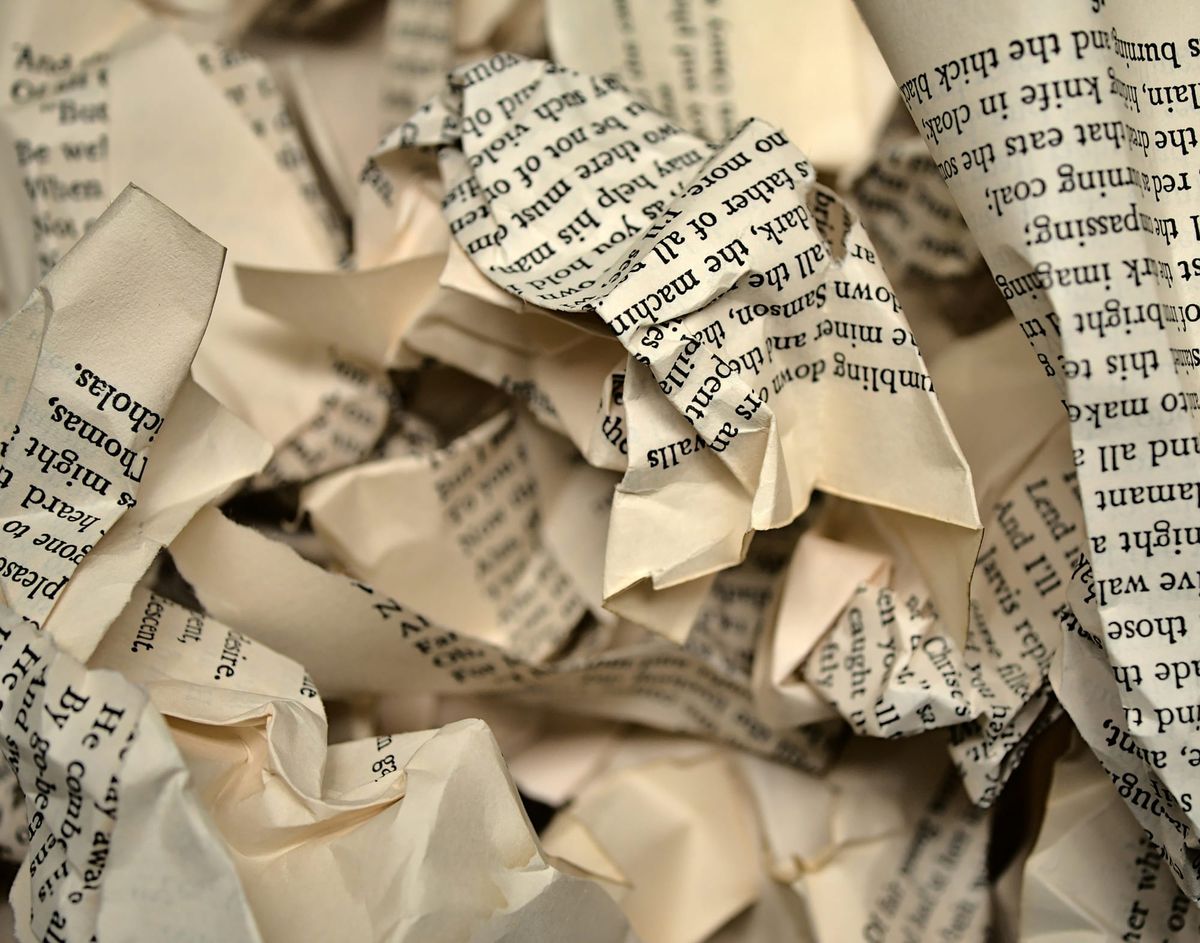

A few weeks ago, the FDA sent a warning letter to Purolea Cosmetics Lab. It's been getting a lot of attention, mostly framed as a warning shot at AI in pharmaceutical manufacturing. "FDA says AI is a liability." That kind of thing.

I want to offer a different read. Not because the AI angle isn't important (it is), but because the full letter provides a lot of context that the headlines leave out, and that context actually makes the lesson sharper.

Let me start with what the letter is really about.

What was actually going on at this facility

Before we get to AI, it's worth reading the full picture. This wasn't a sophisticated operation that happened to make a technology misstep. The FDA found insects, filth, and leaves in production areas. Drug products were being released without any microbiological testing. Components weren't tested for identity, purity, or quality. Two products on the market were unapproved new drugs making claims about treating shingles and genital herpes. The quality unit had effectively stopped functioning.

This is a facility where compliance had broken down across almost every dimension. The company has since ceased drug production.

I'm not pointing this out to minimize the AI section. I'm pointing it out because it matters for how you read the AI section. This wasn't a well-run company that got tripped up by a novel technology. This was a company that was cutting corners everywhere, and AI was one of those shortcuts. Understanding that changes the lesson.

The line I haven't been able to stop thinking about

In the AI section of the letter, the FDA notes that the company used AI agents to generate drug product specifications, procedures, and master production and control records. When investigators found that the company hadn't conducted process validation before distributing drug products, they informed Purolea of the requirement under 21 CFR 211.100.

The company's response: they weren't aware of the legal requirement, because the AI agent they used never told them it was required.

Sit with that for a second.

In a facility that wasn't testing products, wasn't reviewing batch records, and had insects in the manufacturing area, the company's answer to a fundamental CGMP requirement was: "The AI didn't mention it."

What the FDA is actually saying

The FDA's response, grounded in 21 CFR 211.22(c), is that the Quality Unit is always responsible for reviewing AI-generated documents to ensure they are accurate and actually compliant with CGMP. That obligation doesn't transfer to an AI. It never did.

I want to be clear about this because I've seen it misread: the FDA is not anti-AI. The letter explicitly acknowledges that AI can be used as an aid in document creation. What the FDA is saying is that if you use it that way, a qualified human has to review what it produces before it goes into your QMS. That's not a new standard. That's the same standard that has always applied to any document in your quality system, regardless of who or what created it.

The same way you'd review a consultant's SOPs before filing them, you review AI output before relying on it. The fact that the generator was software doesn't change who is responsible for the output's accuracy.

The FDA is telling you AI can help. They're also telling you that "the AI did it" is not an answer to a 483 observation.

The real problem: outsourced judgment

Here's what I think is the most important thing in this case, and I haven't seen many people talk about it directly.

Purolea wasn't just using AI to draft documents. They were using AI for regulatory intelligence, to determine what requirements applied to them in the first place. When a requirement didn't surface in the AI's output, they assumed it didn't apply.

That is a fundamentally different and much higher-risk use case.

Using AI to draft a procedure you already know needs to exist, within a process a qualified person has already defined? Reasonable, with proper review.

Using AI to determine what procedures need to exist at all? That requires judgment the tool doesn't have. Not because AI is bad at generating text, but because regulatory intelligence isn't just information retrieval. It's context. It's interpretation. It's understanding not just what the regulations say, but how they've been applied and enforced across different product types, facility sizes, and inspection histories. No general-purpose AI agent has that, and the ones that don't disclose that gap are genuinely dangerous to rely on.

The tool is only as good as the expertise behind it. An AI that hasn't been grounded in the right requirements from the start isn't a compliance tool. It's a confidence generator. And in a regulated environment, confidence without accuracy is exactly the kind of thing that ends with a warning letter.

What good AI-assisted compliance actually looks like

The right question here isn't "should we use AI?" It's "are we using AI where it genuinely adds value, with the right expertise and oversight in the loop?"

We think about this in two layers.

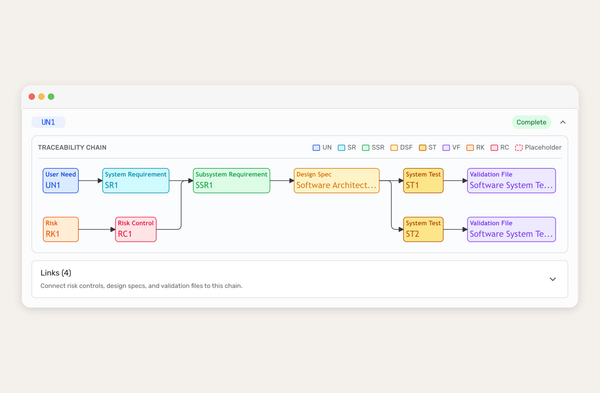

The first is process design. Before any AI does anything in our system, qualified experts define the right requirements and build a compliant workflow. The AI runs within that framework. It executes within expert-designed boundaries and doesn't determine what those boundaries are. "Expert-led, AI-enabled" is more than a phrase we use for marketing; it's a design principle that directly addresses the failure mode in this letter.

The second is quality oversight. The FDA explicitly invokes 21 CFR 211.22 for a reason: your Quality Unit is the essential check on every document in your QMS, including anything AI helped create. We build our agents to support that review process, not to route around it. The goal is audit-ready output that a qualified person can efficiently review and approve. That's what actually holds up under inspection.
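To make the two layers concrete, here is a minimal sketch in Python. It is an illustration only, not our product's code and not anything the FDA prescribes. Every name in it (`REQUIRED_DOCUMENTS`, `Draft`, `missing_requirements`, `approve`, `release_to_qms`) is hypothetical, and the two document types are placeholders rather than a complete CGMP list. The idea is simply that the list of required documents comes from qualified people, and nothing reaches the QMS without a named reviewer.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical, expert-defined list of documents the process must have.
# Qualified people maintain this list; the AI never decides what is on it.
REQUIRED_DOCUMENTS = {"process_validation_protocol", "master_production_record"}


@dataclass
class Draft:
    doc_type: str
    body: str
    generated_by_ai: bool
    approved_by: Optional[str] = None  # set only by a Quality Unit reviewer


def missing_requirements(drafts: list[Draft]) -> set[str]:
    """Layer 1: check drafts against the expert-defined list,
    not against whatever the AI happened to produce."""
    return REQUIRED_DOCUMENTS - {d.doc_type for d in drafts}


def approve(draft: Draft, reviewer: str) -> Draft:
    """Layer 2: record the human sign-off on a draft."""
    if not reviewer.strip():
        raise ValueError("A named reviewer is required")
    draft.approved_by = reviewer
    return draft


def release_to_qms(draft: Draft) -> str:
    """Refuse to release any draft, AI-generated or not, without sign-off."""
    if draft.approved_by is None:
        raise PermissionError("Quality Unit review is required before release")
    return f"{draft.doc_type} released, approved by {draft.approved_by}"
```

In this sketch, a set of drafts that contains only a master production record would be flagged as missing the process validation protocol, whether or not the AI ever mentioned it, and calling `release_to_qms` on an unreviewed draft raises an error instead of filing it.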

Good AI in this space can do both things Purolea was trying to do, generate documents and support regulatory intelligence. But it has to be grounded in expert knowledge from the start, with quality oversight as a core feature, not an afterthought. Purolea wasn't using the wrong concept. They were cutting corners across the board, AI included, and skipping the oversight layer that makes any of it work.

The FDA has now put this in writing. Quality is responsible for what's in your system, regardless of how it got there. That's a predictable place for the agency to land. The surprise isn't what they said. The surprise is that it needed to be said.

Where this is going

This won't be the last time AI comes up in a warning letter. As more companies reach for AI tools to manage compliance workload, the FDA will be watching how those tools are implemented, reviewed, and controlled.

The companies that get this right will be the ones that start with the right requirements, build AI into a qualified process, and treat quality oversight as non-negotiable. Not the ones that hand a general-purpose AI agent the keys and assume it knows the regulations better than they do.

If you're building out your quality and regulatory processes and want to understand where AI can genuinely help versus where it creates risk, that's exactly the question we built our system to answer.

Interested in hearing more? Talk to us.